February 2022

Hybrid images

As introduced by Oliva et al. in the 2006 SIGGRAPH paper "Hybrid Images", high-frequency data from one image can be combined with low-frequency data from another to produce an image in which each original is visible at a different viewing distance. Put simply, there is a limit to how fine a detail people can resolve. At a distance, the high-frequency information in an image is no longer visible, and the low-frequency data is all one can see. Up close, on the other hand, the high-frequency information is clearly visible. For this reason, a hybrid image can combine two different images into one, with each seen somewhat clearly at its own distance.

Filters for different frequencies

As suggested in the paper, I implement the low-pass filter as a simple Gaussian filter. I implement the high-pass filter as an impulse filter minus a Gaussian, which amounts to subtracting a Gaussian-blurred image from the original. To combine images, I simply average them. Getting appealing results comes down to finding good Gaussian parameters for each of the filters. I also experimented with combining the images using a weighted average, but I ultimately didn't find any of those results more compelling than a regular average (weights both equal to one).
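The filtering and averaging described above can be sketched in a few lines of NumPy. This is a minimal sketch rather than the code used for the results here: it assumes grayscale images stored as 2-D float arrays of the same size, and the function names (`low_pass`, `high_pass`, `hybrid`), the kernel truncation at three standard deviations, and the reflect padding are all illustrative choices.

```python
import numpy as np

def gaussian_kernel_1d(sigma, truncate=3.0):
    """Return a normalized 1-D Gaussian kernel (radius ~ truncate * sigma)."""
    radius = max(1, int(truncate * sigma + 0.5))
    x = np.arange(-radius, radius + 1, dtype=float)
    kernel = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return kernel / kernel.sum()

def low_pass(image, sigma):
    """Blur a 2-D image with a separable Gaussian (assumed reflect padding)."""
    kernel = gaussian_kernel_1d(sigma)
    radius = len(kernel) // 2
    padded = np.pad(np.asarray(image, dtype=float), radius, mode="reflect")
    # Convolve along rows, then columns; "valid" trims the padding back off.
    rows = np.apply_along_axis(np.convolve, 1, padded, kernel, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="valid")

def high_pass(image, sigma):
    """Impulse minus Gaussian: subtract the blurred image from the original."""
    return np.asarray(image, dtype=float) - low_pass(image, sigma)

def hybrid(far_image, near_image, sigma_low, sigma_high, w_low=1.0, w_high=1.0):
    """Weighted average of the low-passed far image and high-passed near image.

    With both weights equal to one this is the regular average.
    """
    low = low_pass(far_image, sigma_low)
    high = high_pass(near_image, sigma_high)
    return (w_low * low + w_high * high) / (w_low + w_high)
```

The two sigmas are the Gaussian parameters that need tuning per image pair. The high-pass component is centered on zero, so the result generally needs rescaling or clipping before display; that step is left out here.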

Results

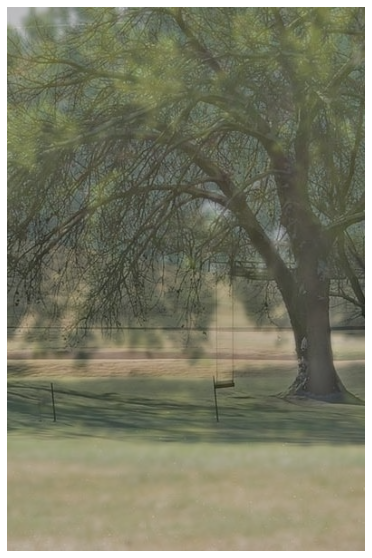

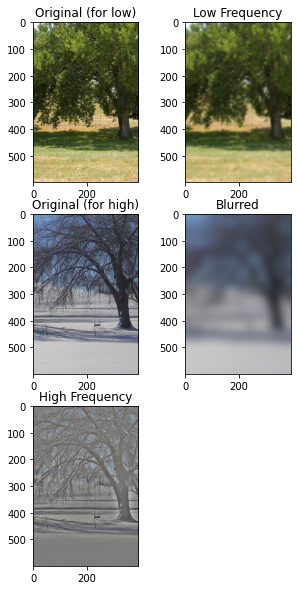

As a big fan of trees, I began by finding a nice pair of pictures of the same tree in winter and in summer. I figured it would be interesting to see the leafy tree at a distance but also see the details of branch positions deep into the tree when closer. Here are the results. Since this is the first result I am showing, I included a graphic containing the images that were produced along the way, which shows the process of creating the final product.

Lord of the Rings

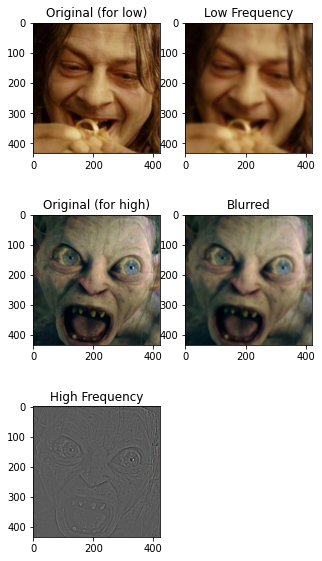

Another natural subject for me after trees is The Lord of the Rings. Here is an image which at a distance shows Smeagol in his early days after finding the Ring, while up close you can see the details of an angry Gollum. (I aligned these images by hand, spending more time than I should have finding images that I liked and then cropping and rotating and mirroring until satisfied.)

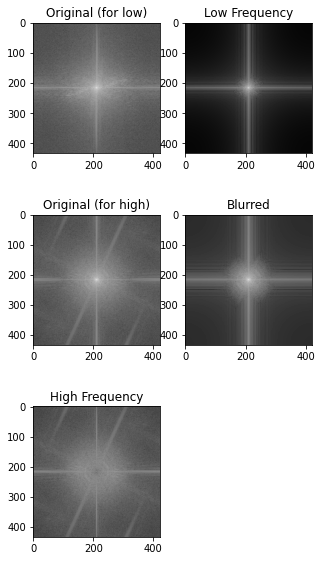

I particularly like this one since it shows a change in character as the viewer changes position. I'll take this chance to show the same process in the Fourier domain. Using the same method as before (and getting the same final product), here are the Fourier transforms of each image along the way. The frequency domain here behaves just how we'd expect. Blurring removes most high-frequency points, especially those that are isolated. Subtracting the blurred image from the original in the high-frequency case leaves just the higher-frequency parts of the image. The spectrum of the resulting hybrid image has brightness all over, since it contains both the low frequencies of one image and the high frequencies of the other.
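A common way to produce this kind of Fourier-domain picture is to take the 2-D FFT, shift the zero frequency to the center, and view the log of the magnitude. The sketch below assumes a grayscale image stored as a 2-D array; the function name `log_magnitude_spectrum` is illustrative and not necessarily how the figures here were made.

```python
import numpy as np

def log_magnitude_spectrum(image):
    """Return log(1 + |F(image)|) with the zero frequency at the center."""
    spectrum = np.fft.fftshift(np.fft.fft2(np.asarray(image, dtype=float)))
    # log1p compresses the large dynamic range so faint high frequencies show.
    return np.log1p(np.abs(spectrum))
```

In the output, low frequencies sit near the center and high frequencies toward the edges, so a blurred image shows up as a bright center with dark surroundings.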

Here is the same final result but in the Fourier domain.

Playing around

With the basics down, what fun can be had now? My first interest is in presenting these images in a GIF that shows something like what a person sees as they walk closer to an image. There is a scene in The Lord of the Rings in which Bilbo is seeing Frodo and the Ring for the first time after a significant period of separation. When he locks eyes with the Ring... he freaks out and makes a scary face. Here is a hybrid image showing Bilbo before the scary face and the scary face itself.

To turn this into a GIF, I use a simple approach. If we want the low frequency to dominate when the viewer is hypothetically far away, and we want the high frequency to dominate up close, let's just take a weighted average of the two components (low and high), where the weights for each frame correspond to the current distance of the viewer. Without trying to figure out anything clever about what this should really look like, I just let each subsequent frame in the GIF have the high-frequency weight increase and the low-frequency weight decrease, each by a constant. That is, over the course of all frames, one weight is just a linear function of frame number (i.e. position of the hypothetical walking viewer), and the other weight is simply one minus the first weight. All these words aside, here is the simple code to do what I'm saying.
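A minimal sketch of this frame-weighting scheme follows. It assumes the low- and high-frequency components are already available as same-sized float arrays, and that the high-frequency weight runs linearly from 0 on the first frame to 1 on the last. The endpoints, the function name `walking_frames`, and the default frame count are assumptions, not values taken from the original code.

```python
import numpy as np

def walking_frames(low, high, n_frames=30):
    """Blend the two components as a hypothetical viewer walks closer.

    The high-frequency weight is a linear function of the frame number;
    the low-frequency weight is one minus it.
    """
    low = np.asarray(low, dtype=float)
    high = np.asarray(high, dtype=float)
    frames = []
    for w_high in np.linspace(0.0, 1.0, n_frames):
        w_low = 1.0 - w_high
        frames.append(w_low * low + w_high * high)
    return frames
```

Each frame would still need to be rescaled to 8-bit values and written out with an image library to produce the actual GIF; that step is omitted here.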

This project was enjoyable, and perhaps more than anything, I was happy to develop some familiarity with the frequency domain that I hope to carry on into future work with images.

Sources

[1] Assignment from Professor Pless

[2] "Hybrid Images" by Oliva, Torralba, and Schyns. In SIGGRAPH, 2006.